When we develop software, we usually rely on other software, either part of the operating system or taken from third-party libraries that provide building blocks we would rather reuse than reimplement from scratch.

We are the proverbial dwarves standing on the shoulders of giants, and that is usually good: better to use an almost-round wheel than to reinvent a square one.

But what happens when we discover that the giant is actually drunk or the wheel we wanted to use is actually triangular?

Better to find that out sooner rather than later and adapt accordingly.

What to look for

When you are lucky, a few implementations of what you need already exist, so you can prepare a comparison table with the key characteristics of each project:

Functionality:

When a piece of software does not offer everything you need, evaluate how much it currently supports and compare its features with those of the alternatives. Try to single out the 2-3 key features you cannot live without.

Source availability:

Usually, it is better to deal with open-source software since you can fully investigate its code, and its development practices are generally open for scrutiny as well.

Open source is a wide world, so when you start using a project, note which license it uses and make sure that the combination of components you ship can legally be distributed together (see REUSE).
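
As a rough illustration of the license check, here is a minimal sketch that flags dependencies whose declared license is not on a list you have agreed to accept. The `Dependency` struct, the `unaccepted` function and the sample data are hypothetical names made up for this example; in practice the license strings would come from your package metadata, and a dedicated tool would parse compound SPDX expressions properly.

```rust
use std::collections::BTreeSet;

/// A dependency and the SPDX license expression declared in its manifest.
/// Hypothetical type for illustration only.
pub struct Dependency {
    pub name: &'static str,
    pub license: &'static str,
}

/// Returns the names of the dependencies whose license is not in `accepted`.
/// Simplification: the license string is compared as a whole, so a compound
/// expression such as "MIT OR Apache-2.0" must be listed verbatim to pass.
pub fn unaccepted<'a>(deps: &'a [Dependency], accepted: &BTreeSet<&str>) -> Vec<&'a str> {
    deps.iter()
        .filter(|d| !accepted.contains(d.license))
        .map(|d| d.name)
        .collect()
}
```

Anything this returns is not necessarily unusable; it is simply a component whose license deserves a closer look before you ship.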

Maintenance status:

Some projects are upfront and mention their maintenance status, but in general you have to figure that out.

Among the key metrics to look at to understand a project’s maintenance status, we suggest:

- Release freshness;

- Source tree freshness;

- Presence and standing on a security fault tracking system, e.g., CVE;

- Average turnaround between the moment an issue is reported and the moment it is fixed: having many faults reported is not in itself as problematic as leaving them unaddressed for a long time.
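
The turnaround metric is easy to compute once you have exported the issue history from the project's tracker. The sketch below assumes a hypothetical `Issue` record with dates expressed as day counts from an arbitrary common origin, which keeps the example free of external crates; the function names are ours, not part of any tool.

```rust
/// An issue with the day it was reported and, if fixed, the day it was fixed.
/// Days are counted from an arbitrary common origin (an assumption of this sketch).
pub struct Issue {
    pub reported_day: u32,
    pub fixed_day: Option<u32>,
}

/// Mean number of days between report and fix, over fixed issues only.
/// Returns `None` when no issue has been fixed yet.
pub fn mean_turnaround_days(issues: &[Issue]) -> Option<f64> {
    let spans: Vec<u32> = issues
        .iter()
        .filter_map(|i| i.fixed_day.map(|f| f.saturating_sub(i.reported_day)))
        .collect();
    if spans.is_empty() {
        None
    } else {
        let total: f64 = spans.iter().map(|&d| f64::from(d)).sum::<f64>();
        Some(total / spans.len() as f64)
    }
}

/// Age in days, as of `today`, of the oldest issue that is still open, if any.
pub fn oldest_open_age_days(issues: &[Issue], today: u32) -> Option<u32> {
    issues
        .iter()
        .filter(|i| i.fixed_day.is_none())
        .map(|i| today.saturating_sub(i.reported_day))
        .max()
}
```

Looking at the oldest open issue next to the mean turnaround reflects the point above: a long list of reports matters less than reports that stay unaddressed.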

Code Quality:

The code of the library you wish to use may or may not have been thoroughly tested and validated. The same tools you should use to evaluate the quality of your code can be applied to evaluate the library you want to use.

- Test coverage

- Code complexity

- Presence of faults detected by static or dynamic analysis

The beauty of open source is that you may contribute with fixes or new features to a project as long as upstream is amicable enough.

In practice

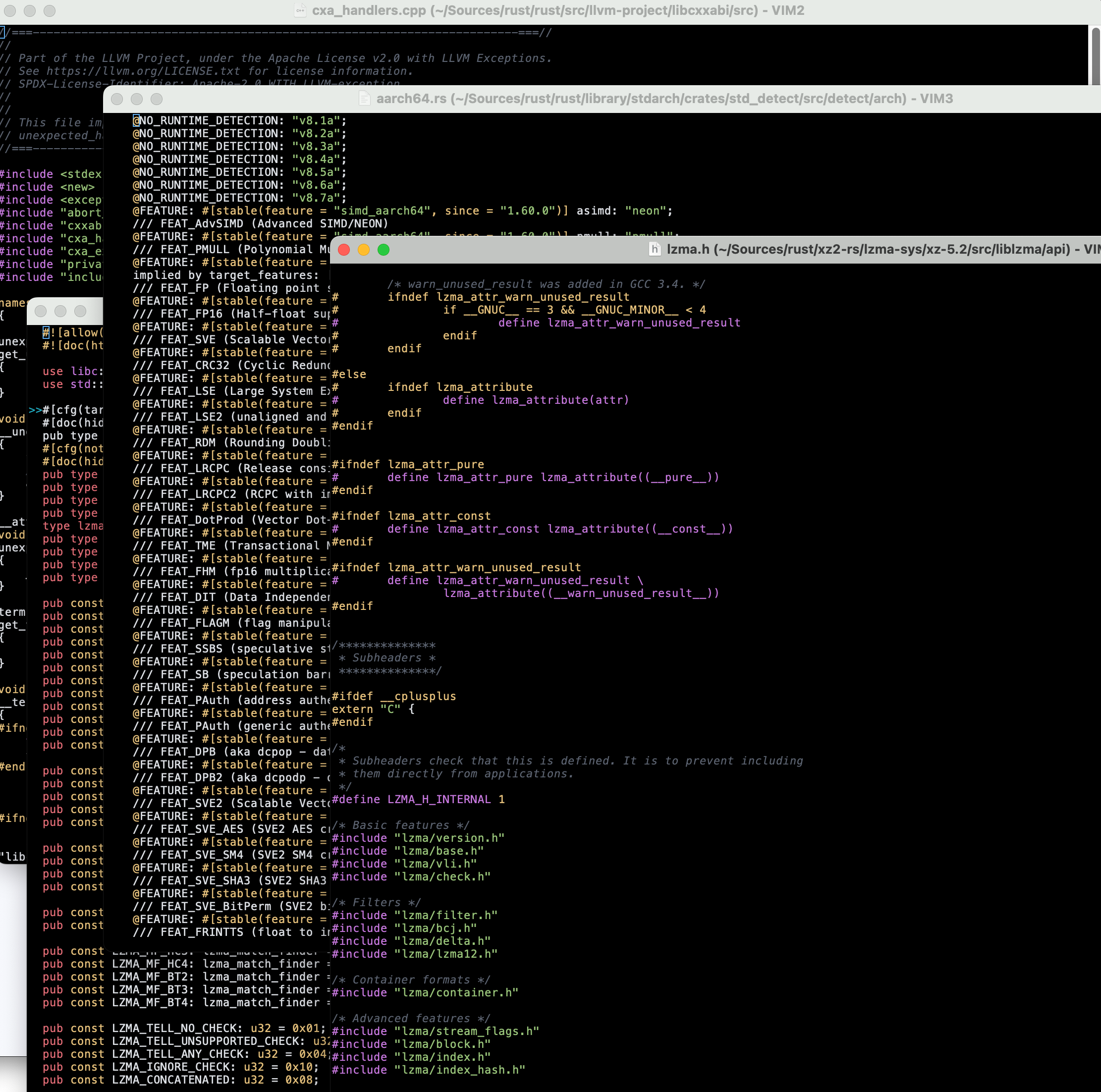

Since in SIFIS-Home we suggest the use of Rust, let’s see how to leverage its ecosystem to evaluate dependencies.

Most of the reasoning translates easily to the ecosystems of other languages: what applies to crates.io also applies to the CheeseShop (the Python Package Index) or, in broader terms, to software distributions such as Nix, Homebrew, or any Linux (meta)distribution in general.

- cargo-about: Sadly, one of the first things you may want to double-check is whether you may legally distribute the software you are using for your purposes. Automatic license extractors such as cargo-about help here; in some distributions this feature is embedded in the package manager, as in Gentoo, or enforced by distribution policy, as in Debian.

- cargo-audit: Once you are sure you can legally use a dependency, it is a good idea to check whether it is safe to use. cargo-audit automatically checks your software and all its dependencies against the known security advisories collected in the RustSec Advisory Database. deps.rs is a service that does the same and produces an SVG badge you can embed in your documentation. It also helps you assess the maintenance status of the project.

- crates.io and lib.rs: The software repositories make it easy to assess how popular a package is.

- complex-code-spotter and rust-code-analysis: Getting some metrics on how hard the code is to understand is usually a good idea.

- cargo-careful and miri: Even though Rust prevents some classes of errors at the language level, it is a good idea to check whether the dependencies you are going to use still have lingering issues that these tools can spot.
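
Some of the tools above can be chained into a single vetting pass. The following is a minimal sketch, not an official workflow: it assumes the cargo subcommands are already installed, that `cargo` is managed by rustup with a nightly toolchain available (needed for the `+nightly` invocations), and that `cargo about init` has already created the `about.toml` and `about.hbs` files cargo-about expects. The function names are hypothetical.

```rust
use std::process::Command;

/// Builds, without running them, some of the checks listed above as `cargo` invocations.
pub fn vetting_commands() -> Vec<Command> {
    let invocations: [&[&str]; 4] = [
        &["about", "generate", "about.hbs"], // license report
        &["audit"],                          // known security advisories
        &["+nightly", "careful", "test"],    // tests with extra runtime checks
        &["+nightly", "miri", "test"],       // tests under the Miri interpreter
    ];
    invocations
        .iter()
        .map(|args| {
            let mut cmd = Command::new("cargo");
            cmd.args(args.iter());
            cmd
        })
        .collect()
}

/// Runs every check in the current directory and returns a description of
/// each one that failed or could not be started.
pub fn failed_checks() -> Vec<String> {
    vetting_commands()
        .into_iter()
        .filter_map(|mut cmd| match cmd.status() {
            Ok(status) if status.success() => None,
            _ => Some(format!("{:?}", cmd)),
        })
        .collect()
}
```

Run from the root of the crate you are evaluating, an empty result from `failed_checks()` means every check passed; a non-empty one tells you where to look first.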

Once your candidate survives the scrutiny, you may happily add it to your Cargo.toml and start using it with some peace of mind.

About the writer

Luca Barbato is a long-time Open Source contributor, member of VideoLan, Gentoo, X.org and a few other organizations. He participates in SIFIS-Home with his company, Luminem SRLs.